LangChain Alternatives in 2026: Beyond the Hype

Looking for LangChain alternatives? Discover the top frameworks for building powerful AI agents in 2026. Compare performance, flexibility, and cost.


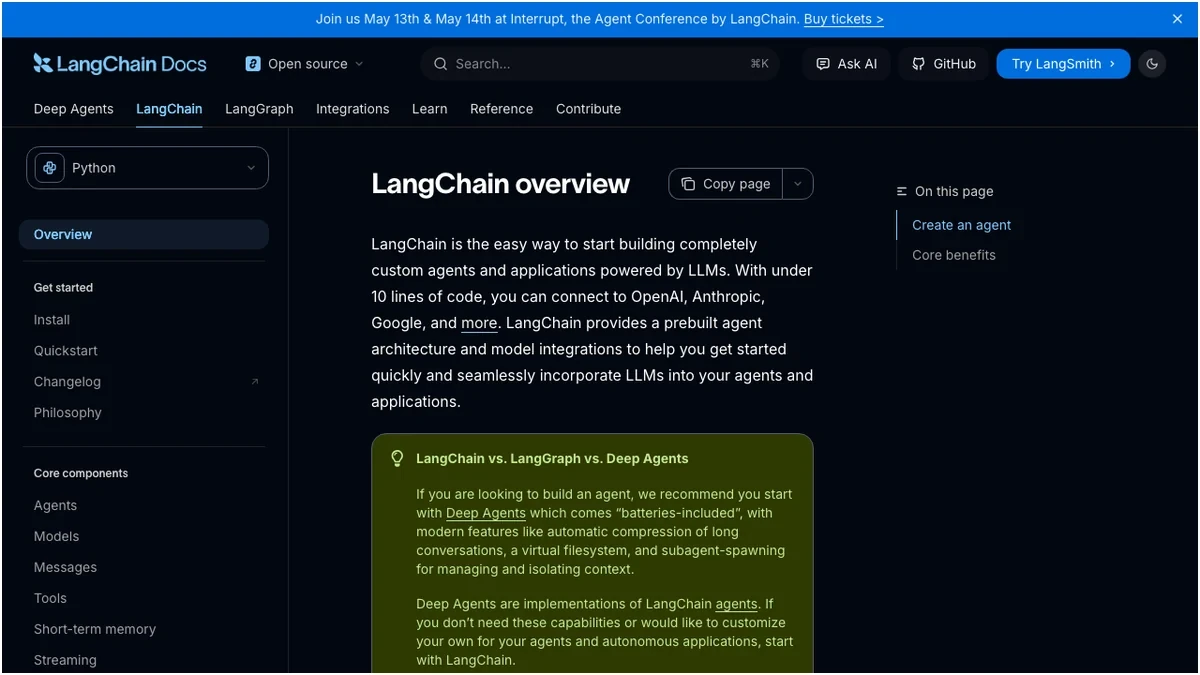

AI agent development is changing quickly. LangChain has been a dominant framework, but by 2026 the market has matured and several alternatives address its perceived limitations. Developers increasingly want frameworks with better performance, more flexibility, and more predictable costs. This article walks through the leading LangChain alternatives so you can make an informed choice for your next AI project.

The Shifting Sands: Why Look Beyond LangChain?

LangChain's initial success stemmed from its comprehensive suite of tools for chaining LLM calls, managing prompts, and integrating with various data sources. However, as AI applications become more complex and production-critical, certain pain points have emerged:

- Complexity and Abstraction: LangChain's extensive abstraction layers can sometimes obscure underlying mechanisms, making debugging and fine-tuning challenging.

- Performance Bottlenecks: In high-throughput or low-latency applications, the overhead LangChain adds around each model call can become significant.

- Cost Predictability: Chains and agents orchestrated by LangChain can issue a variable number of LLM calls per request, which makes operational costs hard to forecast, especially at scale.

- Evolving Ecosystem: The rapid pace of LLM development means frameworks need to be exceptionally agile to keep up with new models and techniques.

These challenges have paved the way for a new generation of frameworks, each with its unique strengths.
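To make the abstraction trade-off concrete, here is a minimal sketch of what "chaining LLM calls" amounts to once the framework layers are removed: a list of functions applied in order. It uses no LangChain API. `fake_llm`, `make_prompt_step`, and `run_chain` are hypothetical names chosen for illustration, and `fake_llm` only echoes its prompt instead of calling a model.

```python
from typing import Callable

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model client; it only echoes the prompt."""
    return f"[model output for: {prompt}]"

def make_prompt_step(template: str) -> Callable[[str], str]:
    """Return a step that inserts the incoming text into `template`."""
    def step(text: str) -> str:
        return template.format(input=text)
    return step

def run_chain(steps: list[Callable[[str], str]], text: str) -> str:
    """Pass `text` through each step in order, printing every intermediate value."""
    for i, step in enumerate(steps):
        text = step(text)
        print(f"step {i}: {text}")
    return text

chain = [
    make_prompt_step("Summarize in one sentence: {input}"),
    fake_llm,
    make_prompt_step("Translate to French: {input}"),
    fake_llm,
]
result = run_chain(chain, "LangChain alternatives in 2026")
```

Because every intermediate value is printed, a failing step is easy to locate, which is the kind of visibility that heavy abstraction can hide.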

Top LangChain Alternatives for 2026

The frameworks below are compared on feature set, performance characteristics, community support, and overall developer experience.

1. LlamaIndex (formerly GPT Index)

LlamaIndex has rapidly ascended as a formidable contender, particularly for data-centric AI applications. Its core strength lies in its sophisticated data indexing and retrieval capabilities, making it ideal for building agents that need to interact with large, complex datasets.

Key Features:

- Advanced Data Connectors: Seamless integration with a vast array of data sources, including databases, APIs, and cloud storage.

- Sophisticated Indexing Strategies: Supports various indexing methods (vector, keyword, graph) for optimized data querying.

- Query Engines: Powerful tools for natural language querying of indexed data, enabling agents to extract precise information.

- Agentic Workflows: Facilitates the creation of agents that can autonomously retrieve, process, and act upon data.

- Modular Design: Offers flexibility in choosing components for data ingestion, indexing, and querying.

Pros:

- Exceptional for RAG (Retrieval Augmented Generation) applications.

- Strong focus on data management and retrieval efficiency.

- Active and growing community.

- Good performance for data-intensive tasks.

Cons:

- Geared toward data retrieval rather than general-purpose orchestration.

- Steeper learning curve for advanced indexing techniques.
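The index-then-retrieve pattern behind RAG can be sketched in a few lines of standard-library Python. This is not LlamaIndex's API. `TinyIndex` is a hypothetical class that scores documents by bag-of-words cosine similarity, where a real deployment would typically use embeddings and a vector store.

```python
import math
import re
from collections import Counter

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class TinyIndex:
    """Hypothetical in-memory index: one term-frequency vector per document."""

    def __init__(self, documents: list[str]) -> None:
        self.documents = list(documents)
        self.vectors = [Counter(tokenize(d)) for d in self.documents]

    def retrieve(self, query: str, top_k: int = 1) -> list[str]:
        """Return the `top_k` documents most similar to `query`."""
        q = Counter(tokenize(query))
        ranked = sorted(
            zip(self.documents, self.vectors),
            key=lambda pair: cosine(q, pair[1]),
            reverse=True,
        )
        return [doc for doc, _ in ranked[:top_k]]

docs = [
    "Invoices are due within 30 days of receipt.",
    "Refunds are processed within 5 business days.",
    "Support is available on weekdays from 9 to 5.",
]
index = TinyIndex(docs)
question = "How long do refunds take?"
context = index.retrieve(question, top_k=1)

# The retrieved text is placed in the prompt so the model answers from it.
prompt = f"Answer using only this context:\n{context[0]}\n\nQuestion: {question}"
```

Keyword overlap is enough for this toy corpus. The choice of indexing strategy (vector, keyword, graph) matters once queries and documents stop sharing exact words.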

2. CrewAI

CrewAI has emerged as a leader in orchestrating multi-agent systems. It simplifies the process of defining roles, tasks, and communication protocols between AI agents, fostering collaborative AI workflows.

Key Features:

- Agent Orchestration: Designed from the ground up for managing multiple interacting agents.

- Role-Based Agents: Allows for defining agents with specific roles, tools, and backstories.

- Task Management: Intuitive system for assigning and tracking tasks for individual agents and agent teams.

- Tool Integration: Easy integration of custom tools and LLM functionalities.

- Collaborative AI: Focuses on enabling agents to work together to achieve complex goals.

Pros:

- Excellent for building sophisticated multi-agent systems.

- Intuitive API for defining agent interactions.

- Promotes modularity and reusability of agent components.

- Strong emphasis on collaboration and emergent behavior.

Cons:

- Less focused on raw data indexing compared to LlamaIndex.

- May require more explicit setup for single-agent applications.
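The role-and-task model can be illustrated with plain dataclasses. This sketch assumes a simple sequential process in which each task's output becomes context for the next. It does not use CrewAI's API. `Agent`, `Task`, `run_sequential`, and `stub_llm` are hypothetical names, and `stub_llm` returns canned text rather than calling a model.

```python
from dataclasses import dataclass
from typing import Callable

seen_prompts: list[str] = []  # records every prompt so the hand-off is visible

def stub_llm(prompt: str) -> str:
    """Hypothetical model call: logs the prompt and returns a canned reply."""
    seen_prompts.append(prompt)
    return f"result #{len(seen_prompts)}"

@dataclass
class Agent:
    role: str
    goal: str
    llm: Callable[[str], str]

    def perform(self, description: str, context: str) -> str:
        """Build a role-specific prompt and send it to the model."""
        prompt = (
            f"You are a {self.role}. Your goal: {self.goal}\n"
            f"Task: {description}\n"
            f"Context from previous task: {context or 'none'}"
        )
        return self.llm(prompt)

@dataclass
class Task:
    description: str
    agent: Agent

def run_sequential(tasks: list[Task]) -> list[str]:
    """Run tasks in order, feeding each output to the next task as context."""
    outputs: list[str] = []
    context = ""
    for task in tasks:
        context = task.agent.perform(task.description, context)
        outputs.append(context)
    return outputs

researcher = Agent("researcher", "collect facts about agent frameworks", stub_llm)
writer = Agent("writer", "turn research notes into a summary", stub_llm)
outputs = run_sequential([
    Task("List three frameworks", researcher),
    Task("Write a two-sentence summary", writer),
])
```

Inspecting `seen_prompts[1]` shows the writer receiving the researcher's output, which is the collaboration step a multi-agent framework manages for you.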

3. AutoGen

Microsoft's AutoGen offers a flexible framework for building LLM applications using multiple agents that can converse with each other. It emphasizes a conversational approach to agent interaction, allowing for dynamic task delegation and problem-solving.

Key Features:

- Multi-Agent Conversations: Agents can communicate and collaborate through chat-like interactions.

- Flexible Agent Configuration: Supports various agent types, including human-in-the-loop agents.

- Tool Use: Enables agents to leverage external tools and APIs.

- Code Execution: Facilitates agents that can write and execute code for complex tasks.

- Customizable Workflows: High degree of control over agent behavior and interaction patterns.

Pros:

- Powerful for complex problem-solving through agent collaboration.

- Supports human-in-the-loop scenarios effectively.

- Open-source and backed by Microsoft.

- Good for research and experimentation with agentic systems.

Cons:

- Can be more complex to set up and manage for simpler use cases.

- Performance tuning might require deeper understanding of its conversational model.
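The conversational pattern can be sketched as two agents alternating over a shared message history until one emits a stop word. This is an illustration only and does not use AutoGen's API. `ChatAgent`, `converse`, and the scripted reply functions are hypothetical, and the `TERMINATE` stop word is an assumption of this sketch.

```python
from typing import Callable

Message = dict[str, str]  # {"sender": ..., "content": ...}

class ChatAgent:
    """Hypothetical agent whose reply is computed from the full history."""

    def __init__(self, name: str, reply_fn: Callable[[list[Message]], str]) -> None:
        self.name = name
        self.reply_fn = reply_fn

    def reply(self, history: list[Message]) -> Message:
        return {"sender": self.name, "content": self.reply_fn(history)}

def converse(first: ChatAgent, second: ChatAgent, opening: str,
             max_turns: int = 6, stop_word: str = "TERMINATE") -> list[Message]:
    """Alternate replies, starting with `second`, until the stop word or turn limit."""
    history: list[Message] = [{"sender": first.name, "content": opening}]
    speakers = [second, first]
    for turn in range(max_turns):
        message = speakers[turn % 2].reply(history)
        history.append(message)
        if stop_word in message["content"]:
            break
    return history

def writer_reply(history: list[Message]) -> str:
    """Scripted stand-in for a model: numbers each new draft."""
    drafts = sum(1 for m in history if m["sender"] == "writer")
    return f"Draft {drafts + 1}"

def reviewer_reply(history: list[Message]) -> str:
    """Scripted stand-in for a model: approves the second draft."""
    last = history[-1]["content"]
    if last == "Draft 2":
        return "Approved. TERMINATE"
    return f"Please revise {last}"

reviewer = ChatAgent("reviewer", reviewer_reply)
writer = ChatAgent("writer", writer_reply)
history = converse(reviewer, writer, "Please write a draft")
for message in history:
    print(f'{message["sender"]}: {message["content"]}')
```

The `max_turns` cap is worth keeping in any real setup: without it, two agents that never agree would keep generating billable model calls.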

4. Semantic Kernel

Microsoft's Semantic Kernel is a more general-purpose SDK that bridges the gap between LLMs and traditional programming languages. It allows developers to integrate LLM capabilities into existing applications with ease, focusing on prompt engineering and tool orchestration.

Key Features:

- Prompt Engineering: Robust tools for creating, managing, and executing complex prompts.

- Plugin Architecture: Extensible system for integrating custom functions and services.

- Orchestration: Enables chaining of LLM calls and native functions.

- Memory Management: Support for various memory types to provide context to LLMs.

- Cross-Language Support: Available for C#, Python, and Java.

Pros:

- Excellent for integrating LLMs into existing applications.

- Strong focus on prompt engineering and templating.

- Mature and well-supported by Microsoft.

- Good for building AI-powered features within larger software projects.

Cons:

- Less focused on pure multi-agent orchestration compared to CrewAI or AutoGen.

- May require more boilerplate code for complex agentic workflows.
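The idea of mixing native functions with prompt-backed functions can be sketched with a small registry. This does not use Semantic Kernel's API. `MiniKernel`, `add_native`, `add_prompt`, and `invoke` are hypothetical names, prompts are rendered with Python's `string.Template`, and `stub_llm` only wraps its input.

```python
from string import Template
from typing import Callable

class MiniKernel:
    """Toy registry holding native functions and prompt-backed functions."""

    def __init__(self, llm: Callable[[str], str]) -> None:
        self.llm = llm
        self.functions: dict[str, Callable[..., object]] = {}

    def add_native(self, name: str, fn: Callable[..., object]) -> None:
        """Register ordinary Python code under a name."""
        self.functions[name] = fn

    def add_prompt(self, name: str, template: str) -> None:
        """Register a function that renders `template` and sends it to the model."""
        def prompt_fn(**kwargs: object) -> str:
            return self.llm(Template(template).substitute(**kwargs))
        self.functions[name] = prompt_fn

    def invoke(self, name: str, **kwargs: object) -> object:
        """Call a registered function by name, native or prompt-backed."""
        return self.functions[name](**kwargs)

def stub_llm(prompt: str) -> str:
    """Hypothetical model call that wraps the prompt instead of answering it."""
    return f"LLM({prompt})"

kernel = MiniKernel(stub_llm)
kernel.add_native("word_count", lambda text: len(text.split()))
kernel.add_prompt("summarize", "Summarize in $limit words: $text")

text = "hello world"
limit = kernel.invoke("word_count", text=text)                 # native code
summary = kernel.invoke("summarize", text=text, limit=limit)   # prompt-backed
```

Both kinds of function share one calling convention, so application code can chain them without caring which ones reach a model.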

5. Vellum

Vellum positions itself as a production-focused platform for building and deploying LLM applications. It emphasizes developer experience, version control, and robust deployment pipelines, making it suitable for enterprise-grade solutions.

Key Features:

- Prompt Management: Comprehensive tools for prompt versioning, testing, and deployment.

- LLM Orchestration: Visual editor for designing and managing complex LLM workflows.

- Evaluation and Monitoring: Built-in tools for performance tracking and quality assurance.

- Deployment Pipelines: Streamlined process for deploying LLM applications to production.

- Collaboration Features: Designed for team-based development of AI applications.

Pros:

- Ideal for production deployments and enterprise use cases.

- Strong emphasis on developer experience and workflow management.

- Excellent for prompt versioning and A/B testing.

- Provides robust monitoring and evaluation capabilities.

Cons:

- Primarily a managed platform, which might mean less low-level control than open-source frameworks.

- Pricing can be a consideration for smaller projects or startups.

Feature Comparison: LangChain vs. The Alternatives

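To provide a clearer picture, here is how the frameworks compare on their primary focus, based on the profiles above:

- LangChain: Broad toolkit for chaining LLM calls, managing prompts, and integrating data sources. Its abstraction layers can make debugging harder.

- LlamaIndex: Data indexing and retrieval (vector, keyword, graph) with query engines. Less suited to general-purpose orchestration.

- CrewAI: Role-based multi-agent orchestration with task management. Less focused on data indexing.

- AutoGen: Conversational multi-agent workflows with human-in-the-loop support and code execution. More setup for simple use cases.

- Semantic Kernel: Prompt templating, plugins, and memory for C#, Python, and Java applications. Less focused on multi-agent orchestration.

- Vellum: Managed platform with prompt versioning, a visual workflow editor, evaluation, and deployment pipelines. Less low-level control.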

Pricing Considerations

The cost of running LLM applications can vary significantly based on the chosen framework and the underlying LLM providers. While most open-source frameworks are free to use, their operational costs depend on your infrastructure and LLM API usage. Managed platforms like Vellum offer tiered pricing.

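In general, pricing falls into two models:

- Open-source frameworks (LangChain, LlamaIndex, CrewAI, AutoGen, Semantic Kernel): No license fee. You pay for your own infrastructure and for LLM API usage.

- Managed platforms (Vellum): Tiered pricing for the platform itself.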

Note: LLM API costs are a significant factor. Providers such as OpenAI, Anthropic, and Google have their own pricing structures that directly affect your total expenditure. Track token usage per request, along with latency, when optimizing for cost.
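A back-of-the-envelope estimate shows why the number of LLM calls per request matters as much as the per-token price. The prices below are hypothetical placeholders, not any provider's actual rates, and `Price` and `request_cost` are names invented for this sketch.

```python
from dataclasses import dataclass

@dataclass
class Price:
    """Hypothetical price in USD per one million tokens."""
    input_per_million: float
    output_per_million: float

def request_cost(price: Price, input_tokens: int, output_tokens: int,
                 llm_calls: int = 1) -> float:
    """Estimate the USD cost of one user request that triggers `llm_calls` model calls."""
    per_call = (
        input_tokens * price.input_per_million
        + output_tokens * price.output_per_million
    ) / 1_000_000
    return per_call * llm_calls

price = Price(input_per_million=2.0, output_per_million=8.0)  # assumed rates

single_call = request_cost(price, input_tokens=1500, output_tokens=500)
agent_loop = request_cost(price, input_tokens=1500, output_tokens=500, llm_calls=4)

monthly_requests = 100_000
print(f"single call: ${single_call * monthly_requests:,.2f} per month")
print(f"4-call agent: ${agent_loop * monthly_requests:,.2f} per month")
```

With these assumed rates, a single call costs (1500 × 2 + 500 × 8) / 1,000,000 = $0.007, so an agent that makes four calls per request costs four times as much for the same traffic.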

Choosing the Right Framework for Your Needs

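The "best" framework depends on your specific project requirements. As a starting point:

- Choose LlamaIndex if your agents mainly need to query large, complex datasets.

- Choose CrewAI if you want several agents with defined roles working together on tasks.

- Choose AutoGen if your problem suits agents that converse, involve a human in the loop, or write and run code.

- Choose Semantic Kernel if you are adding LLM features to an existing application.

- Choose Vellum if you want a managed platform for deploying and monitoring LLM applications as a team.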

Frequently Asked Questions
