Replicate vs. Hugging Face: Which AI API is Right for You?

Compare Replicate and Hugging Face AI APIs. Discover key differences, features, and pricing to choose the best platform for your AI projects.


Choosing the right platform for deploying your AI models is a critical decision that can significantly impact your project's scalability, cost-efficiency, and developer experience. Two prominent contenders in this space are Replicate and Hugging Face. While both offer powerful solutions for making your models accessible via APIs, they cater to different needs and philosophies.

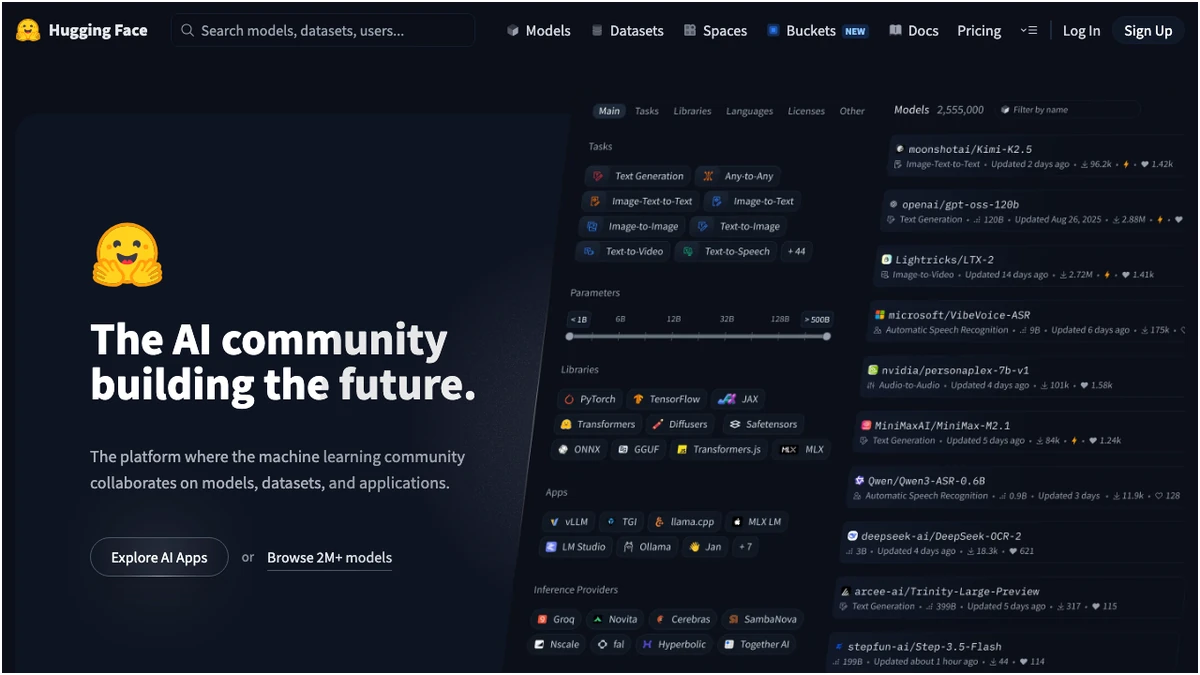

Hugging Face, a titan in the open-source AI community, provides a comprehensive ecosystem for building, training, and deploying models. Replicate, on the other hand, focuses on an API-first, developer-centric approach, emphasizing simplicity and pay-per-second inference. This article dives deep into their offerings to help you make an informed choice.

Core Philosophies and Target Audiences

Hugging Face is built around a vast, collaborative hub for models and datasets, fostering an environment where researchers and developers can share and build upon each other's work. Its strength lies in its breadth, offering tools for the entire ML lifecycle, from experimentation to production. This makes it ideal for teams deeply embedded in the ML ecosystem, requiring flexibility and extensive community support.

Replicate, conversely, is engineered for speed and developer velocity. Its core promise is to abstract away infrastructure complexities, allowing developers to deploy models with a single command and pay only for the compute they consume. This API-first design suits applications that need rapid iteration, handle bursty traffic, or must integrate into existing software stacks without the overhead of managing infrastructure.

Feature Comparison: Replicate vs. Hugging Face

To understand the practical differences, let's break down their key features:

Model Ecosystem

Hugging Face hosts a library of more than 800,000 models and 100,000 datasets, which makes it the strongest option for model discovery and exploration. If you're looking for a niche model or want to experiment with a wide variety of architectures, Hugging Face is your go-to.

Replicate, while not as vast, curates a significant collection of models, with a particularly strong emphasis on generative AI applications like image generation, text-to-video, and audio synthesis. Their focus is on providing production-ready, high-quality models that are easy to deploy.

Deployment and Infrastructure

Replicate's core value proposition is its extreme simplicity. Deploying a model typically involves a single command, and the platform handles all underlying infrastructure. This "no-ops" approach is a massive boon for developers who want to focus on building applications rather than managing servers.

Hugging Face offers more flexibility. Inference Endpoints provide a managed service for deploying models, with control over the instance type and scaling. Spaces host interactive demos and applications, running either on free basic hardware or on upgraded paid hardware. While powerful, these options usually involve more configuration and a deeper understanding of deployment strategies than Replicate's streamlined process.
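To make the difference concrete, here is a minimal sketch of how a request to each platform might be assembled from application code. It only builds the HTTP requests and does not send them. The function names, the placeholder model version, and the endpoint URL are hypothetical, and the payload shapes (`version` plus `input` for Replicate's predictions API, `inputs` for a Hugging Face Inference Endpoint) reflect the common case; check each platform's API reference for the model you actually use.

```python
import json
import urllib.request


def build_replicate_request(token: str, version: str, model_input: dict) -> urllib.request.Request:
    """Build (but do not send) a request that creates a Replicate prediction."""
    body = json.dumps({"version": version, "input": model_input}).encode("utf-8")
    return urllib.request.Request(
        "https://api.replicate.com/v1/predictions",
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def build_hf_endpoint_request(token: str, endpoint_url: str, inputs: str) -> urllib.request.Request:
    """Build (but do not send) a request to a Hugging Face Inference Endpoint.

    endpoint_url is the URL of an endpoint you have already created;
    it is an assumption of this sketch, not a fixed address.
    """
    body = json.dumps({"inputs": inputs}).encode("utf-8")
    return urllib.request.Request(
        endpoint_url,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Either request can then be sent with `urllib.request.urlopen(request)`. The practical difference is what has to exist beforehand: the Replicate call only needs a model version identifier, while the Hugging Face call assumes you have already provisioned an endpoint.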

Custom Model Deployment

Both platforms allow for the deployment of custom models. Hugging Face's Inference Endpoints offer a robust environment for this, giving you granular control over your deployment. Replicate uses Cog, its open-source tool for packaging machine learning models into containers. You describe your model's dependencies and prediction interface, Cog builds a container image from them, and Replicate runs that image behind its API. This approach fits naturally with Replicate's API-first philosophy.

Pricing: Cost-Effectiveness for Different Workloads

Understanding the pricing models is crucial for long-term cost management.

Hugging Face Pricing Breakdown

Hugging Face offers a freemium model. The free tier provides limited access to their Inference API. For more substantial usage or private repositories, Pro and Team plans offer increased quotas and features.

For production deployments, Inference Endpoints are priced by the hour of instance time. You pay for as long as your endpoint is running, whether or not it is processing requests. This can be cost-effective for steady, predictable workloads but becomes expensive if endpoints sit idle for long periods, unless you configure them to scale to zero when idle. Upgraded Spaces hardware for hosting demos is also billed hourly.

Replicate Pricing Breakdown

Replicate's pricing is fundamentally different: pay-per-second. You are charged only for the compute time your model actually uses while processing a request. This is highly advantageous for applications with variable or bursty traffic, because you avoid paying for idle time. Rates start at about $0.000225 per second for entry-level GPU hardware and vary with the hardware a model runs on: CPU-only instances cost less and high-end GPUs cost more. Replicate also offers free credits upon signup, allowing you to experiment without immediate cost.
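The trade-off between the two billing models comes down to simple arithmetic, sketched below. The per-second rate is the $0.000225 figure quoted above; the $0.50 hourly rate, the 730-hour month, and the traffic numbers are assumptions chosen purely for illustration, not published prices.

```python
SECONDS_PER_HOUR = 3600
HOURS_PER_MONTH = 730  # average month length, assumed for illustration


def per_second_cost(requests: int, seconds_per_request: float, rate_per_second: float) -> float:
    """Cost when you are billed only for the seconds spent serving requests."""
    return requests * seconds_per_request * rate_per_second


def always_on_cost(hours: float, rate_per_hour: float) -> float:
    """Cost of an endpoint that is billed for every hour it is running."""
    return hours * rate_per_hour


def break_even_busy_fraction(rate_per_hour: float, rate_per_second: float) -> float:
    """Fraction of each hour the model must be busy before hourly billing wins.

    A result above 1.0 means per-second billing is cheaper at any load
    for this particular pair of rates.
    """
    return rate_per_hour / (rate_per_second * SECONDS_PER_HOUR)


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests per month at 2 seconds each.
    bursty = per_second_cost(10_000, 2.0, 0.000225)
    steady = always_on_cost(HOURS_PER_MONTH, 0.50)  # hypothetical hourly rate
    print(f"per-second billing: ${bursty:.2f}")
    print(f"always-on endpoint: ${steady:.2f}")
    print(f"break-even busy fraction: {break_even_busy_fraction(0.50, 0.000225):.0%}")
```

Under these assumed numbers the lightly used workload costs a few dollars on per-second billing versus a few hundred for an always-on endpoint, and hourly billing only becomes cheaper once the model is busy for roughly 60% of every hour. Substituting your own rates and traffic into the same functions gives a quick first estimate.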

Pros and Cons: A Balanced View

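To summarize the strengths and weaknesses of each platform, based on the comparison above:

Hugging Face:

- Pros: the largest hub of models and datasets, tooling for the full ML lifecycle, granular control over deployments, and strong community support.

- Cons: deployment involves more configuration, and hourly billing means you can pay for idle endpoints.

Replicate:

- Pros: very simple deployment, no infrastructure to manage, pay-per-second billing that suits bursty traffic, and a strong catalog of generative AI models.

- Cons: a smaller model catalog than Hugging Face, and less direct control because the platform manages the infrastructure for you.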

When to Choose Which Platform

The decision between Replicate and Hugging Face hinges on your specific project requirements, team expertise, and traffic patterns.

Choose Hugging Face if:

- You need access to the widest possible range of models and datasets. Discovery is paramount.

- Your team is deeply involved in the ML lifecycle and values integrated tools for training, fine-tuning, and deployment.

- You have steady, predictable inference workloads where per-hour pricing for Inference Endpoints is cost-effective.

- You require granular control over your deployment environment and are comfortable with more configuration.

- Community support and collaboration are key drivers for your project.

Choose Replicate if:

- Simplicity and developer velocity are your top priorities. You want to deploy models with minimal effort.

- Your application experiences variable or bursty traffic. Pay-per-second billing is a significant advantage here.

- You are building applications that require fast, on-demand inference without the overhead of managing infrastructure.

- You are primarily focused on generative AI models and want easy access to production-ready implementations.

- You want to integrate AI capabilities into your application quickly without becoming an infrastructure expert.